The Next.js that runs on Vercel is not the same as the one that runs on your infrastructure

Most usage examples for today’s frameworks assume that you’re running on some kind of serverless infrastructure.

A Node server that does server-side rendering (SSR) with one of these frameworks (Remix, in this example) looks similar to this:

    import { createRequestHandler } from "@remix-run/express";
    import express from "express";

    // notice that the result of `remix build` is "just a module"
    import * as build from "./build/index.js";

    const app = express();
    app.use(express.static("public"));

    // and your app is "just a request handler"
    app.all("*", createRequestHandler({ build }));

    app.listen(3000, () => {
      console.log("App listening on http://localhost:3000");
    });

This is very simple and straightforward. However, if you build an application with either Remix or Next.js, put it inside a Docker container, and run it on a VM or in a Kubernetes environment, it won’t scale the way you might expect, particularly if you rely on SSR.

SSR is a CPU-bound operation: if it runs on the main thread of a Node.js server, it blocks the event loop, and the application queues other requests while each render is in progress.
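The effect is easy to reproduce without any framework. The sketch below is only an illustration, not how Remix or Next.js actually render: `fakeRender` is a hypothetical stand-in that spins the CPU for a fixed time, and `measureTimerDelay` (also hypothetical) reports how late a zero-delay timer fires when a render starts right after the timer is scheduled.

```javascript
// Hypothetical stand-in for an SSR call: burns CPU synchronously for `ms` milliseconds.
function fakeRender(ms) {
  const end = Date.now() + ms;
  while (Date.now() < end) {
    // busy-wait: nothing else can run on this thread in the meantime
  }
  return "<html>...</html>";
}

// Schedules a 0 ms timer, then renders synchronously, and resolves with the
// number of milliseconds that passed before the timer callback actually ran.
function measureTimerDelay(renderMs) {
  return new Promise((resolve) => {
    const scheduledAt = Date.now();
    setTimeout(() => resolve(Date.now() - scheduledAt), 0);
    fakeRender(renderMs); // the timer callback cannot run until this returns
  });
}

measureTimerDelay(200).then((delay) => {
  console.log(`0 ms timer fired after ${delay} ms`);
});
```

The reported delay is never shorter than the render itself, because the timer callback has to wait for the synchronous work to finish. Any other request handled by the same process waits in the same way.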

Streaming does not solve the fundamental problem: rendering still shares the event loop with incoming requests and callbacks. Streaming can partially unblock the event loop if the renderer yields after each chunk is generated, but for large components the render cycle will still block the event loop for a substantial amount of time.
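A minimal sketch of that yielding pattern, under the assumption that the work can be split into chunks at all: `renderChunk` is a hypothetical CPU-bound stand-in, and `renderInChunks` hands control back to the event loop with `setImmediate` after each chunk. It is not the streaming implementation of any particular framework.

```javascript
// Hypothetical stand-in for rendering one chunk of a page (CPU-bound).
function renderChunk(index, ms) {
  const end = Date.now() + ms;
  while (Date.now() < end) {
    // busy-wait to simulate render work
  }
  return `<section>chunk ${index}</section>`;
}

// Resolves via setImmediate, so queued I/O callbacks and expired timers
// get a turn before the next chunk starts.
const yieldToEventLoop = () => new Promise((resolve) => setImmediate(resolve));

// Renders `chunkCount` chunks, passing each one to `onChunk` and yielding in between.
async function renderInChunks(chunkCount, msPerChunk, onChunk) {
  for (let i = 0; i < chunkCount; i++) {
    onChunk(renderChunk(i, msPerChunk));
    await yieldToEventLoop();
  }
}

// Demo: a timer scheduled before the render runs between chunks,
// instead of waiting for the whole render to finish.
let emitted = 0;
setTimeout(() => console.log(`timer ran after ${emitted} of 5 chunks`), 0);
renderInChunks(5, 20, () => {
  emitted += 1;
});
```

The longest stall is now roughly the duration of a single chunk rather than of the whole render, which is why one large component that cannot be split still blocks everything else while it renders.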

In this situation, response times rise quickly under a large number of requests.

What happens when you deploy a Next.js application to Vercel?

A good way to understand this is to compare it with what happens when you run a Next.js app on Vercel.

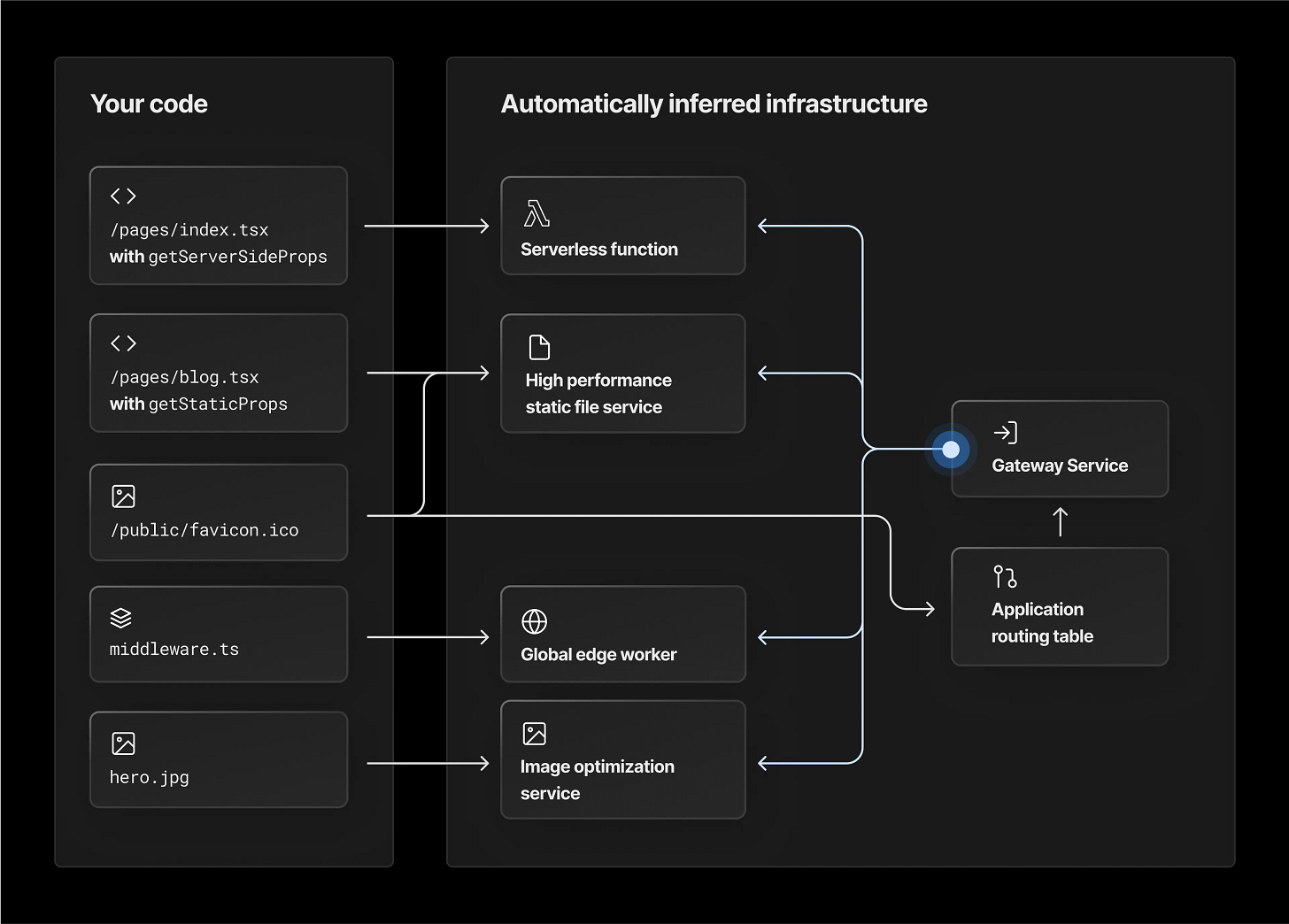

Vercel analyses the build output of your application and deploys it across a mix of services that rely on serverless infrastructure. They call this concept framework-defined infrastructure.

As you can see, your application is deployed across a range of serverless/edge functions and static hosting services. You get one function invocation per request, so the usual Node.js event loop limitations don’t apply: one request → one event loop.

Some projects such as OpenNext are trying to recreate this for the open source community:

OpenNext takes the Next.js build output and converts it into a package that can be deployed to any functions as a service platform. As of now only AWS Lambda is supported.

While Vercel is great, it's not a good option if all your infrastructure is on AWS. Hosting it in your AWS account makes it easy to integrate with your backend. And it's a lot cheaper than Vercel.

Next.js, unlike Remix or Astro, doesn't have a way to self-host using serverless. You can run it as a Node application. This however doesn't work the same way as it does on Vercel.

When you create a Next.js application and put it inside a Docker container, the Node server has to handle everything from SSR to serving static assets. In this situation it’s very easy to flood your app with requests, and you start to see requests stack up and response times degrade.

So what to do?

If you’re using Next.js, you can try OpenNext and deploy to your cloud provider of choice (only AWS is supported at the moment, though), keeping the serverless model. Beware that this is a moving target: support for new versions of Next.js depends on updates from the OpenNext community.

The alternative is to put your Next.js application inside a container and deploy it to an auto-scaling environment such as AKS or EKS. This has different scaling dynamics, however, because your container has to handle all of the application’s requests: SSR, API routes, static assets, and so on. You may want to put at least a CDN and/or some kind of reverse proxy in front of it.
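One way to take repeat traffic off the Node process is to let the CDN or reverse proxy cache whatever it safely can. The sketch below is an assumption about how such a setup might be configured, not something either framework prescribes: `cacheControlFor` is a hypothetical helper that picks a `Cache-Control` value per request path. It relies on Next.js serving content-hashed build assets under `/_next/static/`; the `/api/` prefix, the max-age values, and the idea that SSR pages are not personalised are all placeholders to adapt.

```javascript
// Hypothetical helper: choose a Cache-Control header for a request path so that
// a CDN or reverse proxy in front of the container can absorb repeat traffic.
function cacheControlFor(pathname) {
  // Content-hashed build assets never change under the same URL.
  if (pathname.startsWith("/_next/static/")) {
    return "public, max-age=31536000, immutable";
  }
  // API responses are assumed to be user-specific here: never cache them.
  if (pathname.startsWith("/api/")) {
    return "no-store";
  }
  // SSR pages (assumed not personalised): let the shared cache keep them briefly
  // and serve a stale copy while revalidating, so bursts do not all hit the renderer.
  return "public, s-maxage=60, stale-while-revalidate=300";
}

console.log(cacheControlFor("/_next/static/chunks/main-abc123.js"));
console.log(cacheControlFor("/api/cart"));
console.log(cacheControlFor("/products/42"));
```

With headers along these lines, static assets and cacheable pages are mostly served from the edge, and the container’s event loop is left for the renders that genuinely need it.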

If you’re using Remix, you can choose among most serverless/edge providers, as it is a more open framework with adapters for many of the most popular providers in this space.

You can also put Remix into a Docker container and deploy it to your environment of choice, but the same scaling dynamics apply: your container has to handle all of the application’s requests.

You may also want to consider pricing dynamics here.

Keep in mind that serverless functions can be more expensive for applications with high traffic, and you may face concurrency limits, but they can also be more cost-effective for small-scale applications with moderate usage.

On the other hand, virtual machines may offer better performance and control over the underlying infrastructure, but they can also be more expensive for applications with low traffic requirements.
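A rough break-even calculation can make this trade-off concrete. Every number in the sketch below is a hypothetical placeholder, not a real provider’s rate, and the cost model (compute time plus a per-request fee versus a flat monthly cost) is a simplifying assumption; plug in your own provider’s pricing before drawing conclusions.

```javascript
// All values are HYPOTHETICAL placeholders, not any provider's real pricing.
const example = {
  avgDurationSeconds: 0.1,    // assumed average SSR time per request
  memoryGb: 1,                // assumed function memory size
  pricePerGbSecond: 0.00002,  // placeholder compute price
  pricePerRequest: 0.0000002, // placeholder per-invocation fee
  fixedMonthlyCost: 50,       // placeholder cost of an always-on VM or container
};

// Cost of one serverless invocation: compute time plus the request fee.
function costPerRequest(p) {
  return p.avgDurationSeconds * p.memoryGb * p.pricePerGbSecond + p.pricePerRequest;
}

// Monthly serverless bill for a given number of requests.
function serverlessMonthlyCost(requests, p) {
  return requests * costPerRequest(p);
}

// Monthly request volume at which serverless costs as much as the fixed option.
function breakEvenRequests(p) {
  return p.fixedMonthlyCost / costPerRequest(p);
}

console.log(`break-even at about ${Math.round(breakEvenRequests(example))} requests/month`);
```

Below the break-even volume the per-request model is cheaper; above it the fixed-cost option wins, provided a single instance can actually serve that load.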

Summing up

Outside the realm of serverless, your best option with either framework is to deploy a Docker container to an auto-scaling environment. This lets you keep things simple and avoid dealing with things like Node’s cluster module or worker threads.

These can be tricky to deal with. Worker threads, for example, may be hard or impossible to configure with most modern frameworks, so your best option is usually either the cluster module or simply giving each container one CPU and letting an auto-scaling orchestrator scale horizontally, which gets you closer to the same one request → one event loop model.
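For reference, a minimal sketch of the cluster approach using Node’s built-in `node:cluster` module. `startClustered` and `workerCount` are hypothetical helper names, `handler` is any `(req, res)` function such as an Express app, and the restart logic is deliberately naive. It is written with CommonJS `require` so it runs as a plain script.

```javascript
const cluster = require("node:cluster");
const http = require("node:http");
const os = require("node:os");

// One worker per available CPU unless an explicit count is given (never below 1).
function workerCount(override) {
  const cpus =
    typeof os.availableParallelism === "function"
      ? os.availableParallelism()
      : os.cpus().length;
  return Math.max(1, override ?? cpus);
}

// Forks workers in the primary process; starts an HTTP server in each worker.
function startClustered(handler, { port = 3000, workers } = {}) {
  if (cluster.isPrimary) {
    const count = workerCount(workers);
    for (let i = 0; i < count; i++) {
      cluster.fork();
    }
    // Naive restart: replace any worker that exits so capacity stays constant.
    cluster.on("exit", () => cluster.fork());
    return;
  }
  // Each worker is a separate process with its own event loop;
  // the cluster module lets them all accept connections on the same port.
  http.createServer(handler).listen(port);
}
```

Calling `startClustered(app)` from the server entry point, with `app` being an Express application like the one at the top of this article, starts one process per CPU. A slow render then blocks only the worker that is handling it, while the other workers keep accepting requests.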

This does have more overhead in terms of resource consumption, however. In a Kubernetes environment, for example, it leads to a high number of pods, and therefore of containers each running a full Node instance simultaneously.

You also need to set and tune the autoscaling signals and replica counts carefully; otherwise you may end up with a very large number of pods, or with problems during traffic spikes because of pod startup time.
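To see how those signals translate into pod counts, it helps to play with the rule the Kubernetes Horizontal Pod Autoscaler documents: desired replicas = ceil(current replicas × current metric / target metric), with no change when the ratio is within a tolerance of 1 (0.1 by default). The sketch below re-implements that rule as a plain function for experimentation only; `desiredReplicas` is a hypothetical name, the `minReplicas` and `maxReplicas` options mirror the HPA fields of the same name, and the sample numbers are made up.

```javascript
// Re-implementation of the documented HPA scaling rule, for experimentation only.
function desiredReplicas(current, currentMetric, targetMetric, opts = {}) {
  const { minReplicas = 1, maxReplicas = Infinity, tolerance = 0.1 } = opts;
  const ratio = currentMetric / targetMetric;
  // Close enough to the target: keep the current replica count.
  const wanted =
    Math.abs(ratio - 1) <= tolerance ? current : Math.ceil(current * ratio);
  // Clamp the result to the configured bounds.
  return Math.min(maxReplicas, Math.max(minReplicas, wanted));
}

// 4 pods averaging 90% CPU against a 60% target.
console.log(desiredReplicas(4, 90, 60));
// The same spike, capped by maxReplicas.
console.log(desiredReplicas(4, 300, 60, { maxReplicas: 10 }));
```

With one CPU per pod, a burst of SSR requests pushes the metric far above the target, and the extra pods still have to start before capacity catches up, which is where startup time and replica limits begin to matter.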

If you need more control or a more custom setup, your best option would be vite-plugin-ssr (since renamed Vike), which lets you create your own custom framework from a robust set of primitives, giving you full control.